Each service can be fully contained and scaled independently from others with the help of an orchestrator tool such as Kubernetes, which reduces resource overhead on applications with features that aren't as heavily used as others. Containers offer more benefits for distributed applications - particularly microservices - than for larger, monolithic ones. Over time, container vendors have addressed security and management issues with tool updates, additions, acquisitions and partnerships, although that doesn't mean containers are perfect in the 2020s.Ĭloud container management, accompanied by the necessary monitoring, logging and alert technology, is an active area for container-adopting organizations.

This was a major development for Microsoft shops that wanted to containerize applications and stay compatible with their existing systems. In April 2017, Microsoft enabled organizations to run Linux containers on Windows Server.

Companies such as Pivotal, Rancher, AWS and even Docker changed gears to support the open source Kubernetes container scheduler and orchestration tool, cementing its position as the default container orchestration technology. Efficient networking also posed problems, as well as the logistics of regulatory compliance and distributed application management.Ĭontainer technology ramped up in 2017. A vulnerable container could result in a vulnerable ecosystem without the right precautions baked into the container technology.Īdditional complaints early in the modern evolution of containers bemoaned the lack of data persistence, which is important to the vast majority of enterprise applications. VMs must also each encapsulate a fully independent OS and other resources, while containers share the same OS kernel and use a proxy system to connect to the resources they need, depending on where those resources are located.Ĭoncern and hesitation arose in the IT community regarding the security of a shared OS kernel. By the time Docker 1.0 was released in 2014, the software had been downloaded 2.75 million times, and within a year after that, more than 100 million.Ĭompared with VMs, containers have a significantly smaller resource footprint, are faster to spin up and down, and require less overhead to manage. Within a month of its first test release, Docker was the playground for 10,000 developers.

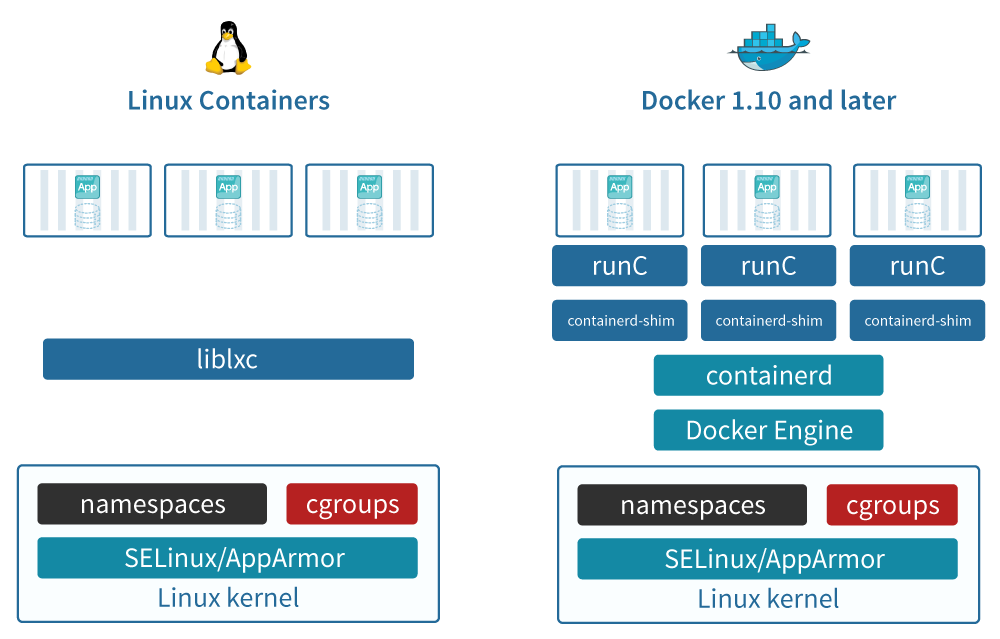

Because Docker enabled multiple applications with different OS requirements to run on the same OS kernel in containers, IT admins and organizations saw opportunity for simplification and resource savings. Namespaces developed shortly thereafter to provide the basis for container network security: to hide a user's or group's activity from others.ĭocker floated onto the scene in 2013 with an easy-to-use GUI and the ability to package, provision and run container technology. The cgroup concept was absorbed into the Linux kernel in January 2008, after which the Linux container technology LXC emerged. Cgroups noted the relationships between processes and reined in user access to specific activities and memory volumes. This led to the development of process containers, which became control groups, or cgroups, as early as 2004. Nevertheless, this kind of container technology could only go so far. Anyone could see what was going on inside the machine, which enabled a system of accounting for who was using the most memory and how to make the system perform better. In those early days of the evolution of containers, security wasn't much of a concern. It relied on the isolation mechanisms that Linux already had in place. Google introduced Borg, the organization's container cluster management system, in 2003. The 2000s were alight with container technology development and refinement.

A key benefit of chroot separation was improved system security, such that an isolated environment could not compromise external systems if an internal vulnerability was exploited. Chroot marked the beginning of container-style process isolation by restricting an application's file access to a specific directory - the root - and its children. The evolution of containers leaped forward with the development of chroot in 1979 as part of Unix version 7. The modern VM serves a variety of purposes, such as installing multiple OSes on one machine to host multiple applications with specific OS requirements that differ from each other. The following decades were marked by widespread VM use and development. VM partitioning, which dates to the 1960s, enables multiple users to access a computer concurrently with full resources via a singular application each.
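To make the chroot confinement described above more concrete, the following Python sketch models how paths requested by a chrooted process map onto the host file system. It is a simplified, purely lexical simulation, not a call to the real chroot(2) system call (Python exposes that as `os.chroot`, which requires root privileges). The helper name `resolve_in_jail` and the `/srv/jail` directory are hypothetical, and symlinks, open file descriptors and privileged escapes are not modeled.

```python
import posixpath


def resolve_in_jail(jail_root: str, requested: str) -> str:
    """Map a path seen by a (simulated) chrooted process to a host path.

    Hypothetical helper: a lexical model of chroot, not the real syscall.
    Symlinks, open file descriptors and privileged escapes are NOT modeled.
    """
    # Normalize the jail directory itself, e.g. "/srv/jail/" -> "/srv/jail".
    root = posixpath.normpath(jail_root)
    # Inside the jail, paths are interpreted against the new "/".
    # Relative paths are anchored at "/" here for simplicity; a real process
    # would resolve them from its current working directory.
    inside = posixpath.normpath(posixpath.join("/", requested))
    # normpath drops ".." at the root ("/../etc" -> "/etc"), mirroring how
    # ".." in a chroot's root directory refers back to that root.
    return posixpath.normpath(posixpath.join(root, inside.lstrip("/")))


if __name__ == "__main__":
    print(resolve_in_jail("/srv/jail", "/etc/passwd"))       # /srv/jail/etc/passwd
    print(resolve_in_jail("/srv/jail", "../../etc/passwd"))  # /srv/jail/etc/passwd
    print(resolve_in_jail("/srv/jail", "/"))                 # /srv/jail
```

The key point the sketch illustrates is that `..` at the new root resolves to the root itself, so a traversal such as `../../etc/passwd` stays inside the jailed directory tree instead of reaching the host's `/etc`.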
